Machine Learning for Digital Humanities

#00. A personal series on Machine Learning in Digital Humanities

Because of my somewhat hybrid background between machine learning and history, I often find myself in conversations within the humanities where a certain confusion about technological concepts becomes apparent. The most common misconception I encounter is the idea that the entire wave surrounding machine learning can be reduced to systems that generate text, images, or video.

Tools such as ChatGPT, Nano Banana and many others have certainly played an important role in the recent explosion of artificial intelligence. However, they represent only a small part of what machine learning actually encompasses. In fact, one could argue that these generative systems may not always be the most valuable tools when it comes to deepening historical knowledge.

For this reason, it seems worthwhile to bring to the table a series of tools, ideas, and reflections that may help us explore practical applications of machine learning in historical research, while at the same time allowing us to better understand how these methods work and what kinds of questions they can help us answer.

To begin this series, I would like to start with a very simple question:

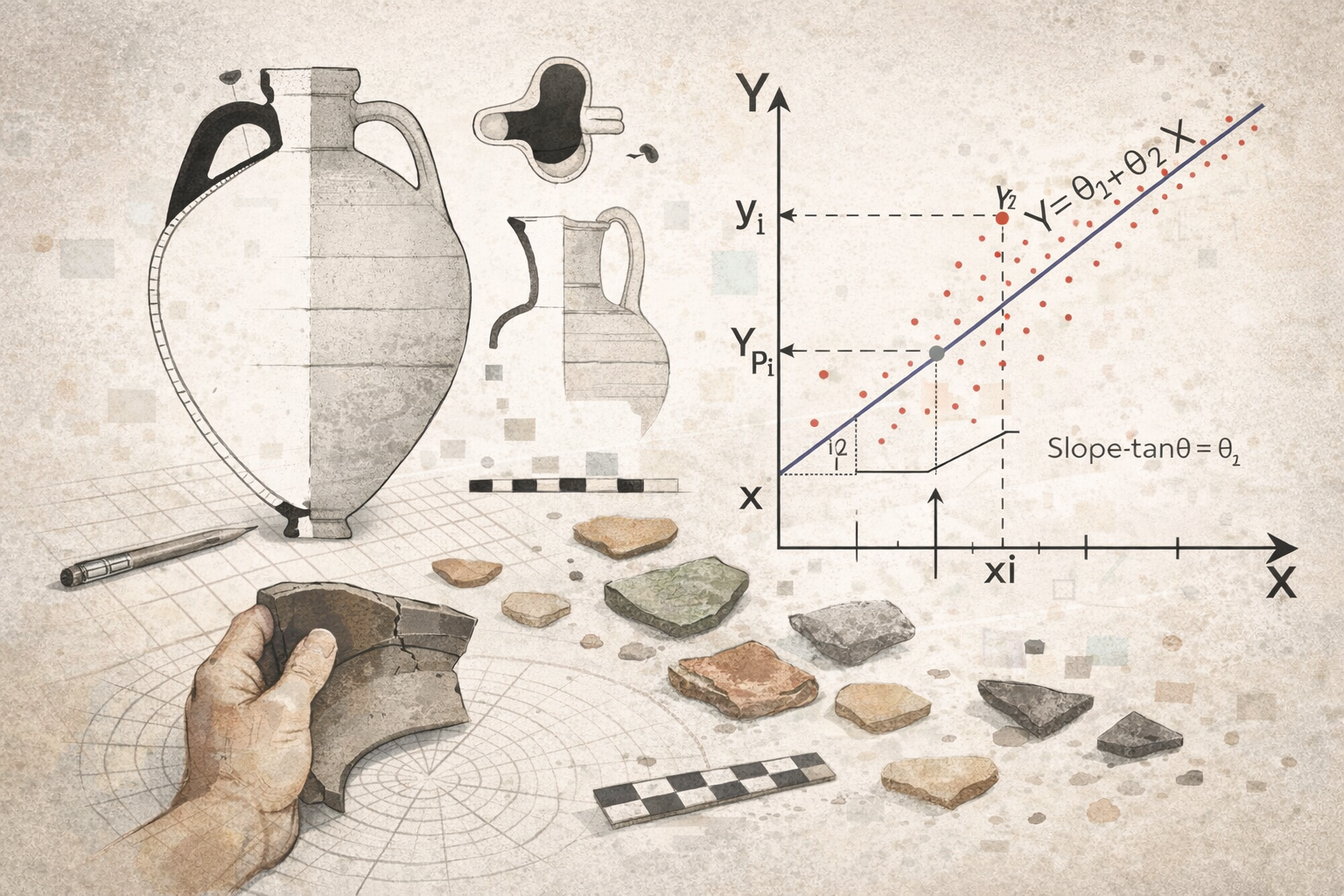

Could we build a model capable of estimating the height of a ceramic vessel if we only know the diameter of its rim?

For now, we will simply take this question as a starting point, without worrying too much about whether it is methodologically perfect. Instead, it will serve as a small experimental exercise that will allow us to observe both the strengths and limitations of this approach.

More importantly, it will help us introduce an idea that I recently encountered while reading Aurélien Géron’s book Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow. In it, Géron refers to a well-known paper by David Wolpert from 1996 where the so-called No Free Lunch theorem is discussed. The central idea is quite striking: if we make no assumptions about the structure of the problem we are trying to solve, then all learning algorithms perform equally well on average across all possible problems.

In practical terms, this means that if we approach a problem without any prior understanding of its structure, choosing one algorithm over another is largely arbitrary. A more productive strategy is to first reflect on the nature of the problem itself, identify the kinds of relationships we expect to find in the data, and only then select the algorithms that are most appropriate.

This naturally leads to a new question:

Which algorithm should we actually use in this case?

That is precisely what we will explore in the next post.